Remember Aesop’s fable about the Tortoise and the Hare? As of 9 AM Central European Time on April 10, 2012, the YouTube “vision video” of Google’s Project Glass had drawn 11,002,798 viewers. In the five and a half days since noon Pacific Time on April 4, 2012, a span covering the 2012 Easter holiday weekend, the video has probably set a benchmark for how quickly a short, exciting video depicting a cool idea can spread through modern, Internet-connected society. [update April 12, 2012: here’s an analysis of what the New Media Index found in the social media “storm” around Project Glass.]

The popularity of the video (and of the Project Glass Google+ page, with 187,000 followers) certainly demonstrates that, beyond the few hundred thousand digerati who follow technology trends, there’s a keen interest in alternative ways of displaying digital information. Who are these 11M viewers? Does YouTube have a way to show where, geographically, the hits originate?

Although the concepts shown in the video aren’t entirely new, the digerati are responding to and engaging passionately with the concept of hands-free, wearable computing displays. I’ve visited no fewer than 50 blog posts on the subject of Project Glass. Most simply report on the appearance of the concept video and ask whether it could be possible. A few authors have invested a little more thought.

Blair MacIntyre was one of the first to jump in, with a critical assessment posted less than a day after the announcement. He fears that success (“winning the race”) in reaching new computing experiences will be compromised by Google moving too quickly, when slow, methodical work would lead to a more certain outcome. Based on the research in his lab and in those of colleagues around the world, Blair knows that the state of the art in many of the core technologies needed to make the Project Glass vision real is too primitive to deliver, reliably and within the next year, the concepts shown in the video. He fears that by setting the bar as high as the Project Glass video has, Google will raise expectations too far, and that a failure to deliver will create a generation of skeptics. The “finish line” for all those who envisage a day when information is contextual and delivered in a more intuitive manner will then move further out.

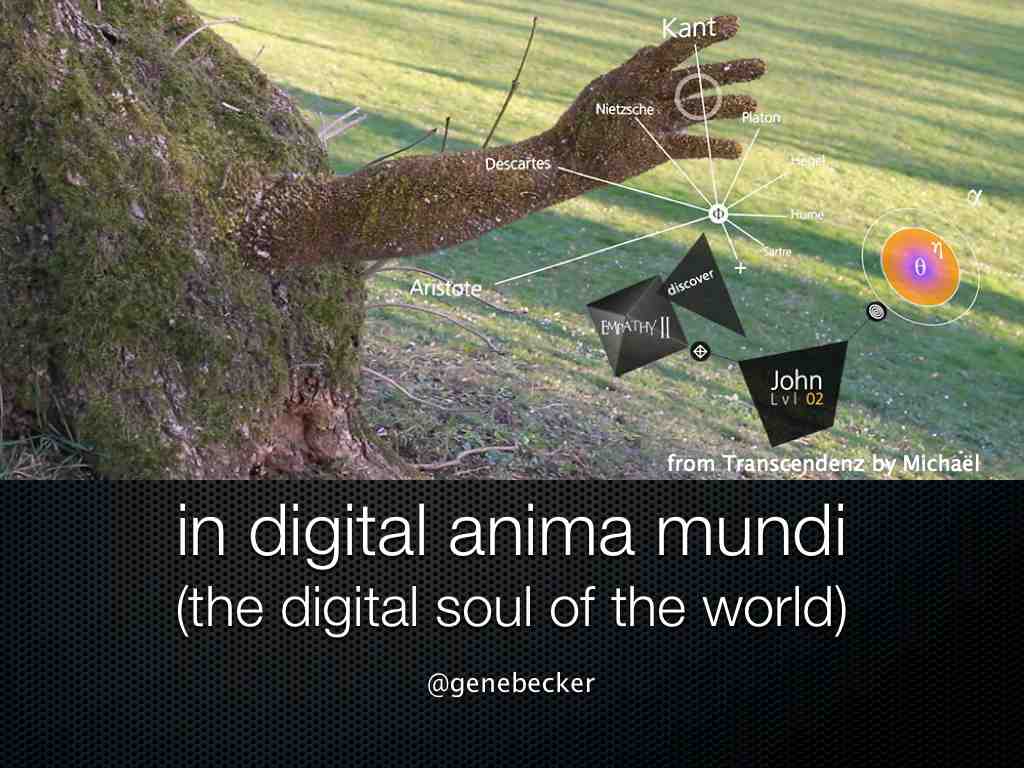

In a similar “not too fast” vein, my favorite post (so far; we are still less than a week into this) is Gene Becker’s April 6 post, published 48 hours after the announcement, on his The Connected World blog. Gene shares my fascination with the possibility that head-mounted sensors like those proposed for Project Glass would lead to continuous life capture. Continuous life capture has been demonstrated for years (Gordon Bell has spent years exploring it and wrote Total Recall; other technologies are actively being developed in projects such as SenseCam), but we’ve not had all the right components in the right place at the right price. Gene focuses on the potential for participatory media applications. I prefer to focus on the anticipatory services that could be furnished to users of such devices.

It’s not explicitly mentioned, but Gene points out something I’ve raised before, and it is my contribution to the discussion about Project Glass in this post: think about the user inputs needed to control the system. Fingertips alone will not be enough; more of the human body (e.g., voice, gesture) will be necessary to control a hands-free information capture, display and control system. Gene writes “Glass will need an interaction language. What are the hands-free equivalents of select, click, scroll, drag, pinch, swipe, copy/paste, show/hide and quit? How does the system differentiate between an interface command and a nod, a word, a glance meant for a friend?”

All movement away from keyboards and mice as input and user interface devices will need a new interaction language.

The success of personal computing in some way leveraged a century of experience with the typewriter keyboard, to which the mouse and the graphical (2D) user interface were late (recent) but fundamental additions. The success of using sensors on the body and in the real world, and of using objects and places as interaction (and display) surfaces for the data, will rely on our intelligent use of more of our own senses, on many more metaphors between the physical and digital worlds, and on highly flexible, multi-modal and open platforms.

Is it appropriate for Google to define its own hands-free information interaction language? I understand that the Kinect camera’s point of view is 180 degrees different from that of a head-mounted device, and that it is a depth camera rather than the simple, small camera on the Project Glass device, but what can we reuse and learn from Kinect? Who else should participate? How many failures will it take before we get this one right? How can a community of experts and users be involved in innovating around and contributing to this important element of our future information and communication platforms?

I’m not suggesting that 2012 is the best or the right time to codify and standardize voice and/or gesture interfaces. Rather, I’m recommending that when Project Glass comes out with a first product, it should include an open interface that permits developers to explore different strategies for controlling information. Google should offer open APIs for interactions as soon as possible, at least to research labs and qualified developers, in the same manner that Microsoft has with Kinect.

If Google is the hasty hare, as Blair suggests, is Microsoft the “tortoise” in the journey to provide hands-free interaction? What is Apple working on, and will it behave like the tortoise?

Regardless of the order in which the big technology players enter, there will be many others who notice the attention Project Glass has received. The dialog on the myriad open issues surrounding the new information delivery paradigm is very valuable. I hope Project Glass isn’t released too soon, but with virtually all the posts I’ve read closing by asking when the blogger can get their hands on and nose under a pair, the pressure to reach the first metaphorical finish line must be enormous.